Colorado SB 21-169: a compliance playbook for insurers

A step-by-step compliance playbook for Colorado SB 21-169: model inventory, quantitative bias testing, ECDIS oversight, remediation, and annual attestation for life, auto, and health insurers, with a worked example.

How Colorado regulates AI in insurance today

For almost five years, Colorado has been the state that matters most for AI regulation in US insurance, and 2026 is the year the full weight of that lands on insurers. If your business underwrites policies, prices risk, pays claims, or builds models for an insurer licensed in the state, this post is for you. We wrote it to be the one resource someone on the compliance team bookmarks when asked how to do this well, not yet another explainer of what the statute says.

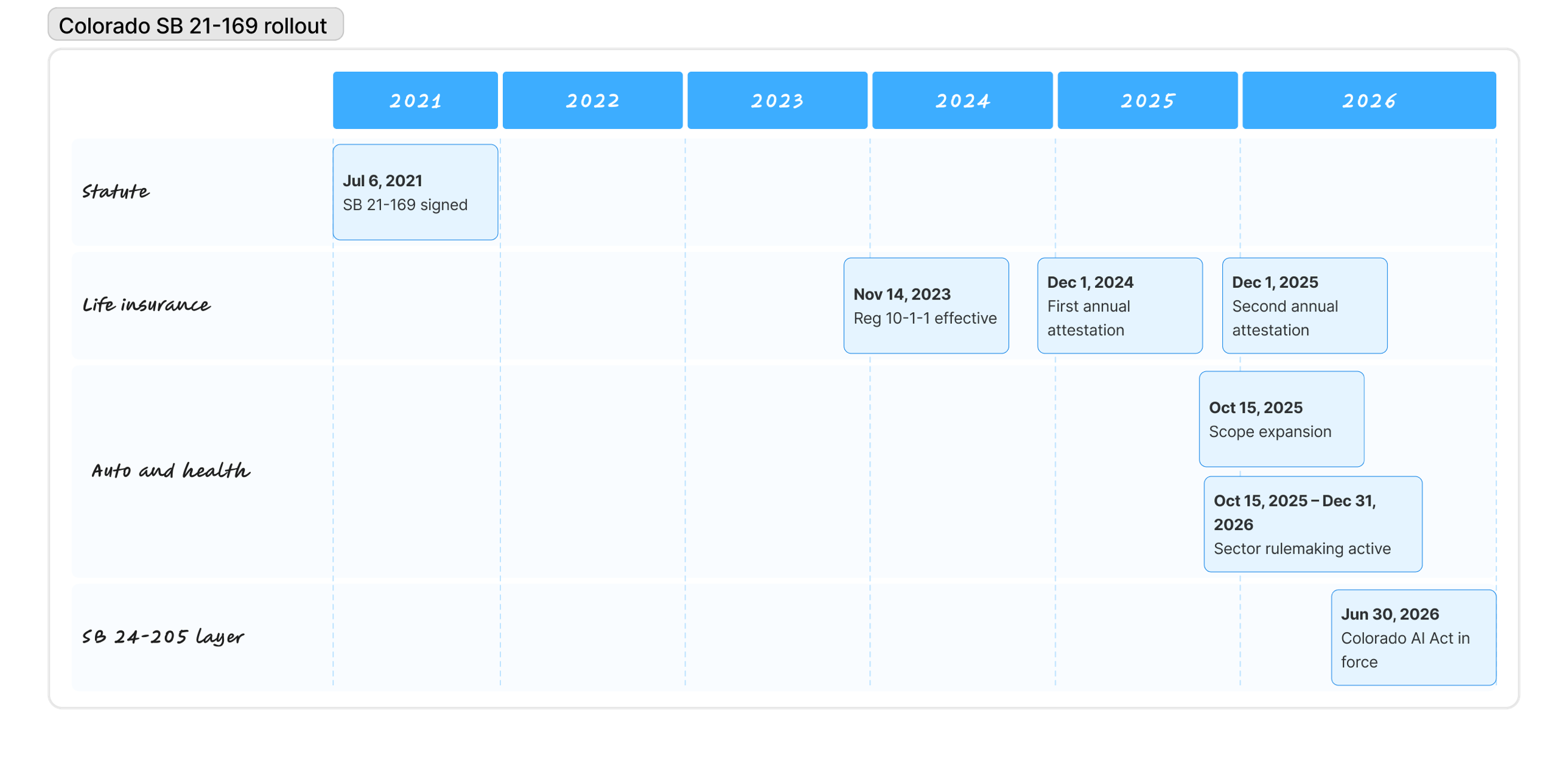

Three concrete changes have landed in the past twelve months, and none of them made much noise outside specialist circles. On October 15, 2025, the bias-testing regime under C.R.S. §10-3-1104.9 stopped being a life-only rule and became a rule for private passenger auto and health benefit plans as well. Most large personal-lines insurers are now in scope, and many do not yet operate to the standard the Division of Insurance expects. On December 1, 2025, life insurers filed their second annual attestation under Regulation 10-1-1, which means the Division now has two years of data, two years of comparisons, and two years of reasons to dig into filings that look suspiciously clean. And on June 30, 2026, the broader Colorado AI Act (SB 24-205) takes effect, enforced by the Attorney General rather than the Division: a second enforcement channel that every Colorado-licensed insurer has to manage on top of SB 21-169.

This playbook walks through what a serious SB 21-169 program looks like from the inside. It covers the statute and its implementing regulations, the operational components you need to stand up, the quantitative methods the Division has come to expect, a worked bias test using a fictional mid-sized auto insurer, the common failure modes that surface in market conduct exams, and how the law relates to the two adjacent AI regimes your compliance team will also have to satisfy. If you only need the short explanation, our SB 21-169 solution page covers it. If you need something you can hand to the risk committee, read on.

What the statute and the regulation ask of you

Most summaries of SB 21-169 blur a distinction that turns out to matter quite a bit. The law itself, signed by Governor Polis in July 2021, is an enabling statute. It lives in the Colorado Revised Statutes as C.R.S. §10-3-1104.9 under the title "Protecting Consumers from Unfair Discrimination in Insurance Practices", and its substance is short. The statute prohibits insurers from using algorithms, predictive models, or external consumer data if the result is unfair discrimination against a protected class, and it directs the Colorado Division of Insurance to write the rules that give those words operational meaning.

Those rules live in Regulation 10-1-1, finalized in September 2023 and in force since November 14, 2023. For life insurance, 10-1-1 is the rulebook in force today, and it is where every reference to quantitative testing, governance, documentation, and annual attestation comes from. When a compliance team says "we comply with SB 21-169", it usually means it complies with Regulation 10-1-1, because the statute on its own is too general to comply with directly.

For private passenger auto and health benefit plans, the story is different. The October 2025 scope expansion brought them under the statute, but the auto and health equivalent of Regulation 10-1-1 is still in rulemaking as of early 2026. The Division has signaled that it intends to follow the same structural approach it used for life, so most insurers are building to the 10-1-1 standard by default, expecting the final line-specific rules to land close to it. It is a defensible bet, but it is still a bet.

Four terms the regulation relies on

The statute and Regulation 10-1-1 use four pieces of vocabulary so often that nothing else makes sense until you pin them down:

- ECDIS (External Consumer Data and Information Sources) is any data the insurer did not collect directly from the consumer: credit-based insurance scores, purchasing history, telematics signals, wearable data, geographic attributes, third-party broker files, consortium feeds. The Division's data-source review is fundamentally an ECDIS review.

- Predictive model covers any statistical or machine learning construct that produces a score, a class, or a prediction feeding an insurance decision. Tree-based models, GLMs, neural networks, and hybrid rules-plus-model stacks are all in scope if they influence a regulated outcome.

- Algorithm is broader still. The regulation uses it for any computational process whose output informs an insurance decision, which means a hand-coded rating engine with no learned parameters can qualify if it drives consequential outcomes.

- Unfair discrimination is the outcome the regulation exists to prevent. It means differential treatment of, or disparate impact on, a protected class that is not justified by a legitimate actuarial basis, operationalized through the quantitative testing regime described later in this post.

Who enforces SB 21-169

The Colorado Division of Insurance enforces SB 21-169, a deliberate choice with real consequences for how compliance programs are built. The Division is the same agency that approves your rating plans, conducts market conduct examinations, and oversees the day-to-day operation of your business in the state. It knows your policy forms, it knows your reserving practices, and it tends to ask questions a general AI regulator would never think of. That sets the evidentiary bar higher than it would be elsewhere. Staff will compare your bias tests with the rate filing memoranda you have submitted, and they will notice inconsistencies.

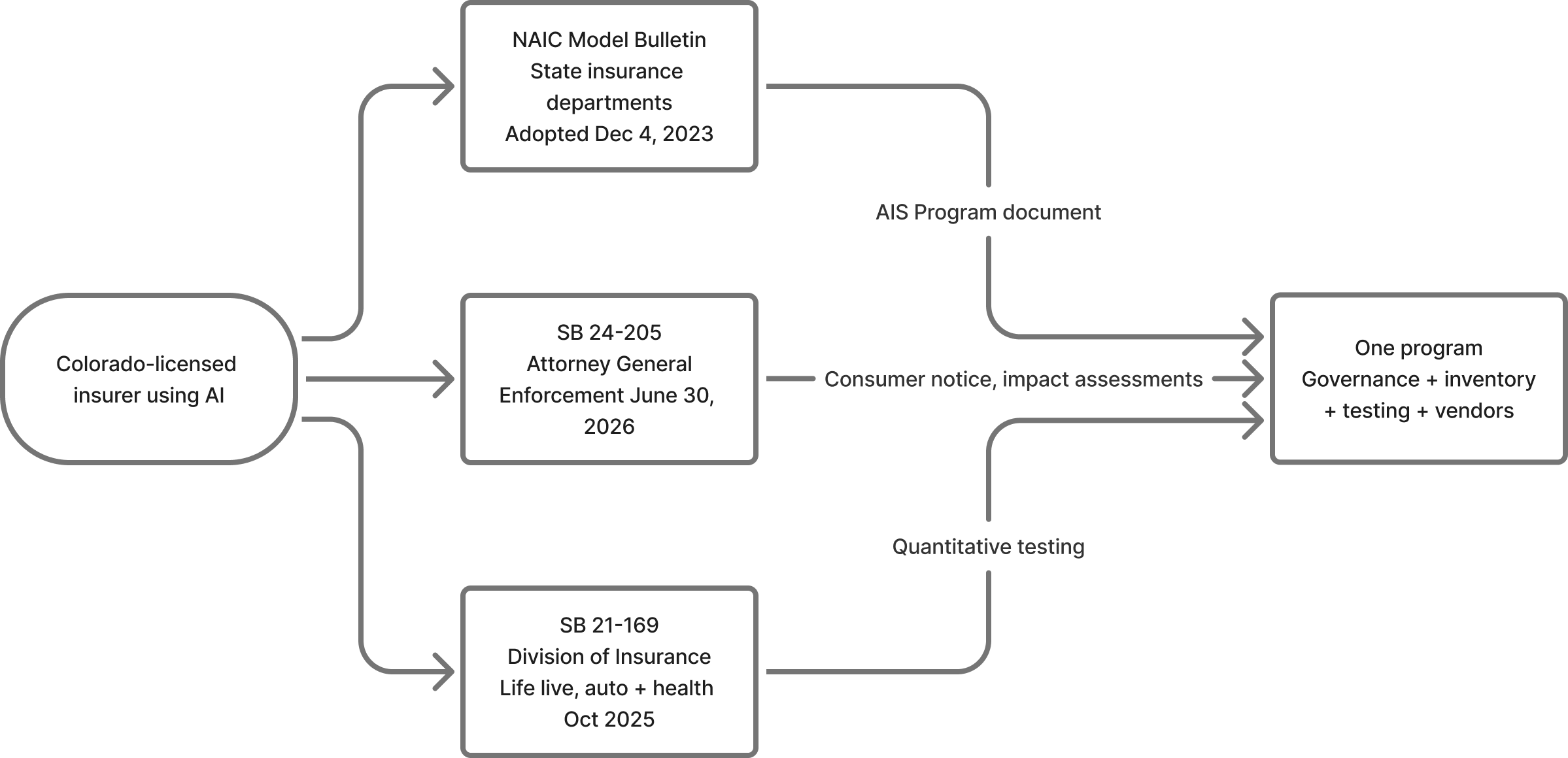

The Attorney General, by contrast, owns enforcement of SB 24-205, the Colorado AI Act, which overlaps with SB 21-169 for every insurer licensed in Colorado. Two regulators, two enforcement styles, one business. The comparison table further down explains how the regimes differ; for now, keep in mind that a Colorado-licensed insurer using AI has at least two distinct compliance relationships to manage, and three if the NAIC Model Bulletin is also counted.

How it rolled out

The pace of the rollout has been deliberately uneven. Between the July 2021 signing and Regulation 10-1-1 taking effect in November 2023, the Division spent two years consulting with actuaries, consumer advocates, and insurers on how to translate the statute's prohibition into measurable practice. Life insurers then filed their first attestation in December 2024. Ten months later, the October 2025 scope expansion broke that cadence by bringing auto and health under the statute before their line-specific rules were ready, and life insurers filed their second attestation in December 2025.

| Date | Event |
|---|---|
| July 2021 | SB 21-169 signed by Governor Polis |
| November 2023 | Regulation 10-1-1 takes effect for life insurance |
| December 2024 | First annual attestation filed by life insurers |
| October 2025 | C.R.S. §10-3-1104.9 scope extends to private passenger auto and health benefit plans |
| December 2025 | Second annual attestation filed by life insurers |
| June 30, 2026 | SB 24-205 takes effect and applies to every Colorado-licensed insurer using high-risk AI |

The seven-part compliance program

Running an SB 21-169 program is less about policy prose and more about operational discipline. The Division expects artifacts, not assurances, and those artifacts come from seven mutually reinforcing components. An inventory without governance is just a list. Governance without testing is just a committee. Testing without vendor oversight leaves the largest source of risk unmanaged. Each component makes the next one possible, and each feeds evidence into the annual attestation that closes the loop.

Program components

- Build the inventory. Every in-scope algorithm, predictive model, and ECDIS feed.

- Establish governance. Written policies, defined roles, senior management ownership.

- Run quantitative tests. Disparate impact analysis at a defensible cadence.

- Worked example: Mesa Mutual's pre-deployment bias test on an auto rating factor.

- Oversee vendors and ECDIS. The obligation stays with the insurer.

- Handle failed tests. Corrective action, remediation, documentation.

- File the annual attestation. Senior management sign-off to the Division.

Part 01: build the inventory

Every credible SB 21-169 program starts with an honest inventory of the AI and data surface that insurance decisions rest on. Most insurers find their first draft uncomfortably long.

An examiner's opening request is almost always some variant of "show us what you have." An insurer that cannot answer concretely has effectively told the Division that its program does not yet exist. The inventory is both the foundation and the artifact the Division will want to compare against your rate filings.

For each entry, the fields that matter are the obvious ones plus those that tie the record back to the rest of the program:

- Purpose: the decision the system influences (underwriting, rating, claims, fraud, marketing, retention).

- Data sources: every input the system consumes, flagging which ones qualify as ECDIS.

- Owner: a named human with authority to make decisions about the system. Not "the data team".

- Vendor attribution: where the model or data comes from and under which contract, linked to the contract text.

- Risk tier: how much consumer harm the system can cause, so that testing cadence scales accordingly.

- Testing status: the most recent disparate impact test run on the system and its result.

- Lifecycle stage: in development, in production, or being retired.
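To make the field list concrete, here is a minimal sketch of one inventory record in Python. The class names, the numeric tier scale, and the checks in `gaps()` are hypothetical illustrations, not a schema the Division prescribes and not VerifyWise's data model:

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import List, Optional


class Lifecycle(Enum):
    DEVELOPMENT = "development"
    PRODUCTION = "production"
    RETIRING = "retiring"


@dataclass
class DataSource:
    name: str
    is_ecdis: bool  # True if the insurer did not collect it directly from the consumer


@dataclass
class InventoryEntry:
    system_id: str
    purpose: str                  # decision influenced: "rating", "claims", "fraud", ...
    data_sources: List[DataSource]
    owner: str                    # a named person, not a team
    vendor: Optional[str]         # None for in-house systems
    contract_ref: Optional[str]   # link to the governing contract text
    risk_tier: int                # hypothetical scale: 1 = highest potential consumer harm
    lifecycle: Lifecycle
    last_test_date: Optional[date] = None
    last_test_passed: Optional[bool] = None

    def ecdis_sources(self) -> List[str]:
        """Names of the inputs that qualify as ECDIS."""
        return [s.name for s in self.data_sources if s.is_ecdis]

    def gaps(self) -> List[str]:
        """Illustrative completeness checks an examiner would likely probe."""
        problems = []
        # Crude heuristic: an owner field that names a team is not a named person.
        if not self.owner.strip() or "team" in self.owner.lower():
            problems.append("owner must be a named individual")
        if self.vendor and not self.contract_ref:
            problems.append("vendor system without a linked contract")
        if self.lifecycle is Lifecycle.PRODUCTION and self.last_test_date is None:
            problems.append("in production with no disparate impact test on record")
        return problems


# Hypothetical entry for illustration.
entry = InventoryEntry(
    system_id="auto-credit-factor-v2",
    purpose="rating",
    data_sources=[
        DataSource("credit-based insurance score", is_ecdis=True),
        DataSource("application answers", is_ecdis=False),
    ],
    owner="Data team",
    vendor="Example Bureau",
    contract_ref=None,
    risk_tier=1,
    lifecycle=Lifecycle.PRODUCTION,
)
print(entry.ecdis_sources())
print(entry.gaps())
```

The example entry fails all three checks, which is roughly what a first-draft inventory looks like before owners, contracts, and test results are linked in.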

A spreadsheet works for a pilot and becomes a liability in year two, because the inventory is a living record that changes every time a vendor ships an update and has to stay linked to test results, incidents, and attestations. Most insurers at scale use a structured model inventory (the thing VerifyWise is built around), but the tool matters less than the discipline of keeping the list current and connected.

The most dangerous failure is silent. ECDIS feeds such as telematics data, aerial imagery, third-party anti-fraud consortium scores, and demographic attributes appended by data brokers tend to arrive through operational teams that do not see themselves as model owners. They end up outside the inventory unless someone goes looking for them specifically. That is the gap the Division's data-source review is designed to find.
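One practical way to go looking is to reconcile what systems actually consume against what the inventory lists. The sketch below assumes you can export, per system, the set of external feeds it reads (for example from pipeline configs, procurement records, or data-access logs); the function name, system names, and feed names are hypothetical:

```python
from typing import Dict, Set


def find_uninventoried_feeds(feeds_in_use: Dict[str, Set[str]],
                             inventoried_feeds: Set[str]) -> Dict[str, Set[str]]:
    """Map each consuming system to the feeds it reads that the inventory lacks."""
    missing = {}
    for system, feeds in feeds_in_use.items():
        gap = feeds - inventoried_feeds  # set difference: used but not inventoried
        if gap:
            missing[system] = gap
    return missing


# Hypothetical export of what each system actually reads.
feeds_in_use = {
    "claims-triage": {"aerial-imagery", "fraud-consortium-score"},
    "auto-rating": {"credit-based-score", "telematics"},
    "retention-marketing": {"broker-demographics"},
}
inventoried = {"credit-based-score", "telematics", "fraud-consortium-score"}

for system, gap in find_uninventoried_feeds(feeds_in_use, inventoried).items():
    print(system, sorted(gap))
```

The reconciliation is only as good as the usage export; feeds that arrive through manual file drops will not show up in pipeline configs.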

Part 02: establish governance

Regulation 10-1-1 is unusually clear that an SB 21-169 program is a senior management program, not a technical one. An examiner's first three requests during a market conduct review are your written policies, your documented roles, and your governance committee minutes. An insurer that cannot produce them on demand has effectively confirmed that the program is informal, which is itself a finding.

The governance layer has four ingredients:

- Written policies covering the acquisition, validation, deployment, monitoring, and retirement of models, algorithms, and ECDIS, with dated senior management or board approval on record.

- A role map with named people in actuarial, data science, underwriting, compliance, legal, and IT, each with clearly delegated rather than assumed authority.

- A cross-functional committee, typically monthly or quarterly, where decisions on high-impact models are made and recorded instead of being handled in email threads that fade into archived inboxes.

- Ongoing oversight, meaning senior management stays in the loop on test results, incidents, remediations, and vendor changes throughout the year, not just at attestation time.

The most common governance failure we see is not absence but strategic vagueness. A policy that says "models will be validated regularly" is worse than no policy at all, because it creates an explicit obligation the insurer cannot prove it has met, and it hands the Division a direct line of questioning. Good policies specify who validates, at what cadence, using which methodology, against which threshold, and with what consequence when the threshold is crossed. Our risk management module encodes that as a structured framework with evidence tied to each control, but the same rigor can be produced on paper.
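The five elements of a good policy map naturally onto a record that refuses to be created with any element left blank. A minimal sketch, assuming a hypothetical `ValidationControl` type; the example values (including the 91-day cadence standing in for "quarterly") are illustrative, not recommendations:

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ValidationControl:
    """One policy control, stated precisely enough to be evidenced."""
    validator: str      # who validates (a named role or person)
    cadence_days: int   # how often
    methodology: str    # which test
    threshold: float    # minimum acceptable impact ratio
    consequence: str    # what happens when the threshold is crossed

    def __post_init__(self) -> None:
        # Reject the "validated regularly" style of policy: every field must be filled.
        for f in fields(self):
            value = getattr(self, f.name)
            if isinstance(value, str) and not value.strip():
                raise ValueError(f"{f.name} must be specified")
        if self.cadence_days <= 0:
            raise ValueError("cadence_days must be a positive number of days")
        if not 0 < self.threshold <= 1:
            raise ValueError("threshold must be an impact ratio in (0, 1]")


# Illustrative values only; the consequence wording is hypothetical.
control = ValidationControl(
    validator="Chief Actuary",
    cadence_days=91,
    methodology="Impact ratio vs. reference class on BISG-assigned classes",
    threshold=0.80,
    consequence="Open a finding and escalate to the governance committee",
)
print(control)
```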

Part 03: run quantitative tests

The testing regime is what sets SB 21-169 apart from other AI governance frameworks. Where other regimes ask for testing as a broad obligation, Regulation 10-1-1 asks for quantitative testing with specific statistical methods on data the Division can inspect.

What the Division tests. The target is disparate impact, not disparate treatment. Nothing hinges on whether your models use race as an input; the industry stopped doing that long ago. The question is whether the output produces materially different rates across protected classes after accounting for legitimate actuarial variation. Protected classes under 10-1-1 include race, color, national origin, religion, sex, sexual orientation, disability, gender identity, and gender expression. Age is handled separately under existing rating rules.

The four-fifths rule. For each protected class, compute the selection rate, which is the share of applicants in that class who receive the favorable outcome. Divide the selection rate of the class being tested by the selection rate of the reference class to get the impact ratio. A ratio of 0.80 or above is within tolerance; anything below is a finding that requires explanation, justification, or remediation.

The math

- Selection rate = favorable outcomes in the class ÷ total applicants in the class

- Impact ratio = selection rate of class X ÷ selection rate of the reference class

0.80 or above: within tolerance. Below 0.80: a finding the Division expects you to explain, justify, or remediate.
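The same definitions in notation, where $F_c$ is the number of favorable outcomes and $N_c$ the number of applicants in class $c$ (symbols introduced here for convenience), with the Black-class figures from the Mesa Mutual example later in this post plugged in:

```latex
\begin{aligned}
\mathrm{SR}_c &= \frac{F_c}{N_c}, \qquad
\mathrm{IR}_c = \frac{\mathrm{SR}_c}{\mathrm{SR}_{\mathrm{ref}}}, \qquad
\text{finding if } \mathrm{IR}_c < 0.80 \\
\mathrm{IR}_{\text{Black}} &= \frac{2050 / 3900}{15250 / 22100}
  \approx \frac{0.526}{0.690} \approx 0.76
\end{aligned}
```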

The race data problem and BISG. Insurers do not collect race directly and cannot start doing so. Regulation 10-1-1 expects insurers to use validated probabilistic proxies. The default is Bayesian Improved Surname Geocoding (BISG), which combines surname and geographic location to estimate a probability distribution over racial categories. The Division has accepted BISG in attestations so far, on the condition that insurers document how they have validated the proxy against their own book of business. BISG produces probabilities with uncertainty attached, not certain labels, and saying "we use BISG" is a starting point, not an answer.
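For readers who have not seen the mechanics, BISG is Bayes' rule applied to two inputs: P(race | surname) from a surname table and P(geography | race) from census counts for the applicant's area. A minimal sketch, assuming both tables are already loaded as dictionaries; the category labels and probabilities below are made up for illustration and are not Census figures:

```python
from typing import Dict


def bisg_posterior(p_race_given_surname: Dict[str, float],
                   p_geo_given_race: Dict[str, float]) -> Dict[str, float]:
    """Bayes' rule: P(r | s, g) is proportional to P(r | s) * P(g | r).

    Assumes surname and geography are independent given race, the standard
    BISG simplification. P(g | r) is the share of group r's population that
    lives in the applicant's area (e.g. ZIP code or census block group).
    """
    unnormalized = {
        race: p * p_geo_given_race.get(race, 0.0)
        for race, p in p_race_given_surname.items()
    }
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("surname and geography give zero joint probability")
    return {race: v / total for race, v in unnormalized.items()}


# Made-up inputs for one applicant (not real Census figures).
surname_table = {"white": 0.50, "black": 0.30, "hispanic": 0.10, "asian": 0.10}
area_shares = {"white": 0.001, "black": 0.004, "hispanic": 0.001, "asian": 0.001}

posterior = bisg_posterior(surname_table, area_shares)
print({race: round(p, 3) for race, p in posterior.items()})
```

Here geography shifts the estimate toward "black" (about 0.63) even though the surname alone pointed to "white", and no category is close to certain. That residual uncertainty is what the validation work against your own book has to characterize.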

Cadence and triggers. Regulation 10-1-1 does not prescribe a frequency. Life insurers have settled on a quarterly rhythm with a deeper annual review tied to the December attestation; auto and health insurers are mostly following the same pattern. Any material model change, data change, or rate revision triggers its own test, because waiting for the next quarterly cycle to discover that a pushed change has produced new disparate impact is exactly the kind of governance gap the Division looks for.
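The cadence rule (quarterly by default, immediately on a material change) is easy to encode as a scheduling check. A minimal sketch, assuming hypothetical event labels and approximating "quarterly" as 91 days; it does not model the deeper annual review:

```python
from datetime import date, timedelta
from typing import Set, Tuple

# Events that trigger an off-cycle test, per the cadence rules described above.
TRIGGER_EVENTS = {"model_change", "data_change", "rate_revision"}
# Assumption: "quarterly" approximated as 91 days.
QUARTER = timedelta(days=91)


def retest_due(last_test: date, today: date,
               events_since_test: Set[str]) -> Tuple[bool, str]:
    """Return (due, reason) for one in-scope system."""
    triggered = events_since_test & TRIGGER_EVENTS
    if triggered:
        return True, "triggered by " + ", ".join(sorted(triggered))
    if today - last_test >= QUARTER:
        return True, "quarterly cycle elapsed"
    return False, "not due"


print(retest_due(date(2026, 1, 15), date(2026, 2, 1), {"rate_revision"}))
print(retest_due(date(2026, 1, 15), date(2026, 2, 1), set()))
print(retest_due(date(2026, 1, 15), date(2026, 4, 20), set()))
```

Checking triggers before the calendar is deliberate: a rate revision two weeks after a passing test still needs its own test.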

Part 04: worked example for Mesa Mutual

A disparate impact test is easier to understand once you see one run end to end.

Meet Mesa Mutual

A fictional mid-sized auto insurer writing business in Colorado, with roughly 180,000 policies. Its pricing team has refined a credit-based rating factor, and the SB 21-169 program requires a disparate impact test before the new factor goes into production.

Mesa takes last quarter's new-business applications that would have been rated under the new factor: 42,000 applicants. The favorable outcome is placement in the standard preferred tier rather than a higher-priced tier. Mesa runs BISG on surnames and ZIP codes, assigns an applicant to a single class when BISG gives that class a probability above 0.80, and labels the rest as mixed.

Native Hawaiian and Pacific Islander applicants (0.8% of the pool) fall below the 2% minimum-share threshold Mesa applies and are excluded from the ratio calculation.
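The two data-preparation rules in this step, hard assignment above 0.80 and the 2% minimum share, can be sketched as follows. The function names are hypothetical, and the sketch assumes each applicant's BISG posterior is already available as a dictionary of class probabilities:

```python
from collections import Counter
from typing import Dict, List, Set

ASSIGN_THRESHOLD = 0.80  # Mesa's rule: single class only when probability > 0.80
MIN_SHARE = 0.02         # Mesa's rule: exclude classes under 2% of all applicants


def assign_class(posterior: Dict[str, float]) -> str:
    """Hard-assign the most likely class, or 'mixed' if no class clears the threshold."""
    best = max(posterior, key=posterior.get)
    return best if posterior[best] > ASSIGN_THRESHOLD else "mixed"


def classes_to_test(labels: List[str]) -> Set[str]:
    """Classes holding at least MIN_SHARE of the whole pool ('mixed' counts toward
    the denominator but is never tested as a class)."""
    counts = Counter(labels)
    total = len(labels)
    return {c for c, n in counts.items() if c != "mixed" and n / total >= MIN_SHARE}


print(assign_class({"white": 0.91, "black": 0.05, "hispanic": 0.04}))   # white
print(assign_class({"white": 0.55, "hispanic": 0.40, "black": 0.05}))   # mixed

# Toy pool of 100 labels: "nhpi" at 1% falls below the 2% cutoff.
labels = ["white"] * 60 + ["hispanic"] * 25 + ["nhpi"] * 1 + ["mixed"] * 14
print(sorted(classes_to_test(labels)))  # ['hispanic', 'white']
```

Hard assignment is the simplest choice; it discards the "mixed" applicants from the ratios, which is one of the limitations worth writing into the proxy methodology.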

| Class | Applicants | Preferred tier | Selection rate |
|---|---|---|---|
| White (reference) | 22,100 | 15,250 | 69.0% |
| Hispanic | 8,400 | 4,750 | 56.5% |
| Black | 3,900 | 2,050 | 52.6% |
| Asian | 2,800 | 2,100 | 75.0% |
| American Indian / Alaska Native | 960 | 560 | 58.3% |

| Class | Impact ratio vs. reference | Pass or fail |
|---|---|---|
| Hispanic | 56.55 / 69.00 = 0.82 | Pass |
| Black | 52.56 / 69.00 = 0.76 | Fail |
| Asian | 75.00 / 69.00 = 1.09 | Pass |
| American Indian / Alaska Native | 58.33 / 69.00 = 0.85 | Pass |
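The ratio table can be reproduced from Mesa's raw counts in a few lines. This is a sketch of the four-fifths calculation only; it assumes classes have already been assigned and small groups excluded as described above, and the function name is hypothetical:

```python
# Counts from the Mesa Mutual example: class -> (applicants, preferred-tier placements).
COUNTS = {
    "White": (22_100, 15_250),
    "Hispanic": (8_400, 4_750),
    "Black": (3_900, 2_050),
    "Asian": (2_800, 2_100),
    "American Indian / Alaska Native": (960, 560),
}
REFERENCE = "White"
THRESHOLD = 0.80


def impact_ratios(counts, reference, threshold=THRESHOLD):
    """Return {class: (selection_rate, impact_ratio, passed)} for each non-reference class."""
    rates = {c: favorable / applicants for c, (applicants, favorable) in counts.items()}
    ref_rate = rates[reference]
    results = {}
    for cls, rate in rates.items():
        if cls == reference:
            continue
        ratio = rate / ref_rate
        results[cls] = (rate, ratio, ratio >= threshold)
    return results


for cls, (rate, ratio, passed) in impact_ratios(COUNTS, REFERENCE).items():
    print(f"{cls:32s} rate={rate:.1%} ratio={ratio:.2f} {'Pass' if passed else 'Fail'}")
```

Working from counts rather than rounded percentages matters at the margin: the American Indian / Alaska Native ratio is about 0.845 before rounding, so it reports as 0.85 here but as 0.84 if computed from the one-decimal percentages.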

Finding

An impact ratio of 0.76 for Black applicants, below 0.80. Under Regulation 10-1-1, this is a finding Mesa cannot ignore.

Mesa's governance committee considers three options: justify the factor actuarially, remediate by adjusting the rating plan, or retire the factor. It chooses remediation: reduce the weight of the credit factor and add a compensating underwriting factor that empirical testing suggests closes the gap without degrading predictive accuracy.

Two weeks later, Mesa reruns the test on the adjusted plan. The Black class ratio moves from 0.76 to 0.83; the Hispanic ratio moves from 0.82 to 0.87. Mesa documents the initial finding, the committee's rationale, the adjustment, the retest result, and the final sign-off. All of it goes into the testing log, linked to the inventory entry, and appears in the December 1 attestation as a documented finding with a clean remediation.

The point is not the math, which is simple once the proxy step is settled. The point is the artifacts. A program that produces only the final revised rating plan has nothing to show the Division. A program that produces the testing log, the committee minutes, the retest evidence, and the revised inventory entry gets through the exam without stress.

Part 05: oversee vendors and ECDIS

The most expensive mistake we see in SB 21-169 programs is assuming that vendor due diligence transfers legal responsibility. It does not. If a vendor's model produces disparate impact on your book of business, the finding is against you.

That creates three operational obligations:

- Pre-onboarding due diligence that records, in writing, the vendor's approach to bias, testing methodology, and data lineage. A vendor certification is evidence, not a substitute for the insurer's own diligence.

- Contract clauses with teeth: audit rights, access to testing data, cooperation with regulatory inquiries, termination rights tied to compliance failures. Standard agreements rarely include any of these.

- Ongoing monitoring. Vendor models get updated, data feeds drift, and a vendor system that passed six months ago can fail the next test with no change on the insurer's side.

The chain of responsibility runs through MGAs, TPAs, and reinsurers. An MGA writing business on your paper operates under your program. A TPA running claims triage on your behalf handles the regulated decisions the statute covers. The question is never whether a vendor sits inside or outside the company boundary; it is whose program holds the evidence when a Division examiner asks. Our vendor management module is built around that question, but any approach that keeps vendor oversight connected to the testing engine, rather than parked in procurement meetings, meets the standard.

Part 06: handle failed tests

Regulation 10-1-1 does not prohibit disparate impact findings. It prohibits ignoring them.

When a test fails, three paths are open:

- Actuarial justification: show that the factor reflects genuine loss experience and that no less discriminatory alternative achieves the same actuarial objective.

- Remediation: adjust the model, data, rating plan, or rule until the ratio returns to tolerance. Retest and document.

- Retirement: take the system out of service when neither justification nor remediation is feasible.

The documentation requirement is the same for all three. The Division wants to see the failed test, the rationale for the decision, the action taken, and evidence that the action worked.

The workflow is structurally similar to incident management: a finding opens a case, governance reviews it, an owner carries out the response, and a retest or a justification closes it. Our incident management module encodes that workflow and ties each finding back to the model inventory and the testing log. Insurers that handle findings over email threads invariably lose track of them.
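That lifecycle can be sketched as a small state machine that records every step as evidence. The state names are hypothetical; the one business rule encoded, that a remediation only closes after a passing retest, follows the "retest and document" requirement above. This is an illustration, not the VerifyWise data model:

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Resolution(Enum):
    JUSTIFICATION = "actuarial justification"
    REMEDIATION = "remediation"
    RETIREMENT = "retirement"


# Hypothetical state names for the case workflow described above.
TRANSITIONS = {
    "open": {"under_review"},
    "under_review": {"response_in_progress"},
    "response_in_progress": {"closed"},
}


@dataclass
class Finding:
    system_id: str
    impact_ratio: float
    state: str = "open"
    resolution: Optional[Resolution] = None
    evidence: List[str] = field(default_factory=list)

    def advance(self, new_state: str, note: str) -> None:
        """Move to the next state and record the step as evidence."""
        if new_state not in TRANSITIONS.get(self.state, set()):
            raise ValueError(f"cannot move from {self.state} to {new_state}")
        self.evidence.append(f"{self.state} -> {new_state}: {note}")
        self.state = new_state

    def close(self, resolution: Resolution, note: str,
              retest_ratio: Optional[float] = None, threshold: float = 0.80) -> None:
        """Close via one of the three paths; remediation needs a passing retest."""
        if resolution is Resolution.REMEDIATION and (
                retest_ratio is None or retest_ratio < threshold):
            raise ValueError("remediation needs a passing retest before closing")
        self.advance("closed", note)
        self.resolution = resolution


# Mesa Mutual's finding, replayed through the workflow.
finding = Finding("auto-credit-factor-v2", impact_ratio=0.76)
finding.advance("under_review", "governance committee reviews the 0.76 ratio")
finding.advance("response_in_progress", "owner reweights the credit factor")
finding.close(Resolution.REMEDIATION, "retest ratio 0.83", retest_ratio=0.83)
print(finding.state, finding.resolution.value, len(finding.evidence))
```

The `evidence` list is the point: each transition leaves a dated-in-practice trail that maps onto the failed test, rationale, action, and proof-of-effect the Division asks for.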

Part 07: file the annual attestation

The annual attestation confirms that a program exists, that it is operating, and that it produces the evidence the regulation asks for. For life insurers, the deadline is December 1 of each year. Auto and health insurers should expect a similar cadence once the line-specific rules are finalized.

The signature falls to senior management, typically the CRO, the CCO, or an equivalent. The Division expects the signer to have genuinely reviewed the filing.

A defensible attestation confirms that:

- A written governance framework exists and is approved at the appropriate level.

- The model inventory is current.

- Quantitative testing was run at the required cadence on the in-scope models and data sources.

- Findings during the year have been logged and remediated.

- Any material change to the program is documented.

A thin attestation usually offers a blanket statement of compliance without the underlying artifacts. The Division has indicated that it will follow up more aggressively on filings that look light. Hiding findings is worse than reporting them: a year with no disparate impact findings across a large book of business is statistically implausible and tends to invite the very scrutiny the insurer was trying to avoid.

Twelve questions an examiner will ask

One of the most useful exercises any insurer can run before its first market conduct exam under 10-1-1 is a simple test: can the program produce, on demand, each of the following artifacts within one business day? An insurer that passes ten of twelve is in good shape. One that passes fewer than eight should treat the gap as its short-term priority.

Exam-day checklist

- The current written governance framework, with senior management approval on record.

- A current model inventory covering every algorithm, predictive model, and ECDIS used in regulated practices.

- Each model's purpose, owner, data sources, risk tier, and vendor attribution.

- Testing logs for every in-scope model, covering the current and prior periods.

- The proxy methodology used to identify protected classes, with its validation record.

- A complete record of every disparate impact finding, the decision rationale, the corrective action, and the retest result.

- Governance committee minutes for the period, showing active oversight of findings.

- Vendor due diligence records for every third-party model or ECDIS feed in use.

- Vendor contracts containing audit rights, cooperation obligations, and ongoing monitoring clauses.

- The annual attestation filed with the Division and the supporting evidence behind it.

- A change log for any material update to in-scope systems during the period, showing how each change was tested.

- Evidence that consumer complaints and adverse-outcome reports tied to algorithmic decisions were investigated and resolved.
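If you want to track this dry run over time, the scoring rule from the introduction to the checklist fits in a few lines. The short item labels are our paraphrases, and because the text classifies only "ten or more" and "fewer than eight", the "borderline" label for eight or nine is an assumption:

```python
from typing import Set, Tuple

# Paraphrased labels for the twelve checklist items above.
CHECKLIST = [
    "governance framework with approval",
    "current model inventory",
    "per-model purpose/owner/sources/tier/vendor",
    "testing logs (current and prior period)",
    "proxy methodology with validation record",
    "finding register with rationale, action, retest",
    "governance committee minutes",
    "vendor due diligence records",
    "vendor contracts with audit/cooperation/monitoring clauses",
    "filed attestation with supporting evidence",
    "change log with per-change testing",
    "complaint and adverse-outcome investigations",
]


def readiness(produced_within_one_day: Set[str]) -> Tuple[int, str]:
    """Score how many artifacts can be produced within one business day."""
    unknown = produced_within_one_day - set(CHECKLIST)
    if unknown:
        raise ValueError(f"not on the checklist: {sorted(unknown)}")
    score = len(produced_within_one_day)
    if score >= 10:
        verdict = "good shape"
    elif score < 8:
        verdict = "treat the gap as the short-term priority"
    else:
        verdict = "borderline"  # 8 or 9: not classified in the text; our assumption
    return score, verdict


print(readiness(set(CHECKLIST[:9])))
```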

SB 21-169 within Colorado's broader AI stack

SB 21-169 does not operate in isolation. Every Colorado-licensed insurer using AI is also subject to the NAIC Model Bulletin if its home state has adopted it, and all of them will be subject to SB 24-205 from June 30, 2026. Three regimes, three regulators, one business.

| Dimension | SB 21-169 | SB 24-205 (Colorado AI Act) | NAIC Model Bulletin |
|---|---|---|---|
| Scope | Insurance only. Life today, auto + health since Oct 2025 | Any high-risk AI in consequential decisions, in any sector | Insurance only. Any AI used by insurers |
| In force | Statute since July 2021 | Enforcement from June 30, 2026 | Template adopted Dec 4, 2023; state-by-state adoption |
| Regulator | Colorado Division of Insurance | Colorado Attorney General | State insurance departments that adopt the bulletin |
| Core obligation | Quantitative testing for unfair discrimination in algorithms, ECDIS, and predictive models | Duty of care, impact assessments, consumer notice, affirmative defense | Written AIS Program: governance, risk management, testing, vendor oversight |
| Testing requirement | Quantitative, with documented methodology | Less prescriptive. Impact assessments and risk management | Testing for errors and unfair discrimination, proportionate to harm |
| Annual filing | Yes. December 1 for life insurers | None. Enforcement-driven | None. Exam-driven |
| Cure period | None | 60 days | None |
| Enforcement model | Market conduct exam plus administrative action | Attorney General enforcement with affirmative defense | Existing market conduct authority under each state's adoption |

A serious SB 21-169 program already covers most of SB 24-205 and the NAIC bulletin. Governance, inventory, vendor oversight, and incident management are effectively identical. SB 24-205 adds consumer notice and a formal impact assessment document; the NAIC bulletin adds an AIS Program document in a specific format. Both additions are incremental rather than parallel builds. For fuller treatments, our Colorado AI Act page covers SB 24-205, and our NAIC AI Principles page walks through the Model Bulletin clause by clause.

What could still change in 2026

Three developments could shift the ground. None of them is a reason to delay the program.

- Auto and health rulemaking. Line-specific rules equivalent to 10-1-1 are being drafted. Expect drafts for public comment during 2026. The final rules will probably track 10-1-1 closely, but specific thresholds and filing cadences could differ.

- SB 24-205 taking effect. June 30, 2026 puts the Colorado AI Act into force. Insurers already running SB 21-169 programs face modest incremental work: consumer notice, impact assessment documentation, and affirmative defense evidence.

- Litigation. xAI has sued Colorado over SB 24-205 on First Amendment grounds. The case targets SB 24-205 specifically; SB 21-169 is not part of the litigation. A successful challenge would leave the Division's regime intact but would change the political environment around Colorado's AI rules more broadly.

The first week of a serious program

A full SB 21-169 implementation is a multi-quarter build for an insurer starting from scratch. The first week, however, is surprisingly simple. Five actions cover it:

Five actions for the first week

- Assemble the inventory. Draft the first list of every algorithm, predictive model, and ECDIS feed currently in use across the business, including every vendor-supplied system.

- Assign a named owner to every entry. A human being with authority to make decisions about that system. If no owner can be named, the system is not ready to remain in use.

- Choose a proxy methodology and document it. BISG is the default. Write down the method, the inputs, the limitations, and the validation plan for the insurer's own book of business.

- Run one real disparate impact test. Pick the highest-impact system in the inventory, pull last quarter's applications, run the test, and record the result honestly.

- Bring senior management into the loop. Share the draft inventory and the first test result with whoever will eventually sign the annual attestation. Their reaction tells you how much governance is still left to build.

None of these five actions requires a platform, a consulting engagement, or significant upfront spend. They require discipline and honesty about where the program stands.

Most insurers at scale eventually converge on the same operational needs: a structured model inventory, a vendor oversight layer, a testing engine that records methodology and results, an incident management workflow for failed tests, and a compliance framework that assembles the evidence package on demand. VerifyWise is built around that shape and is used today by insurers running SB 21-169 programs, but the tooling question is for month three, not week one.

Dates for the compliance calendar

Three dates over the next eighteen months will determine how much runway your program has.

Key dates

- First half of 2026: draft auto and health line-specific rules expected from the Colorado Division of Insurance. The comment periods will be real opportunities to shape the specifics.

- June 30, 2026: SB 24-205 takes effect and applies to every Colorado-licensed insurer using high-risk AI.

- December 1, 2026: annual attestation for life insurers under Regulation 10-1-1. Auto and health insurers should expect a similar cadence once the line-specific rules are finalized.

If your program is behind, the road ahead is shorter than it looks. The Division does not expect perfection in the first attestation, and insurers that arrive with a genuine program and honest findings tend to have productive conversations rather than enforcement actions. If you want a sanity check on where your program stands, get in touch or start a compliance assessment.

About the VerifyWise team

VerifyWise develops source-available AI governance software that organizations use to manage risk, compliance, and oversight across their AI portfolios. Our editorial team draws on hands-on experience implementing governance workflows for regulated industries and fast-growing AI teams.
